Sage Agents - Combining the wisdom of a Sage with the power of LLM-based Agents

A sage is a person who has attained wisdom and is often characterized by sound judgment and deep understanding. Sagents brings this philosophy to AI agents: building systems that don't just execute tasks, but do so with thoughtful human oversight, efficient resource management, and extensible architecture.

- Human-In-The-Loop (HITL) - Customizable permission system that pauses execution for approval on sensitive operations, including parallel tool calls where each action can be individually approved/rejected. Works across both main agents and SubAgents — interrupts propagate up to the parent for approval and resume seamlessly

- Composable Execution Modes - Agent run loops are explicit Elixir pipelines built from reusable steps. Mix and match built-in steps (call_llm, execute_tools, check_pre_tool_hitl, propagate_state, etc.) or write your own. Different agents can use different modes in the same application

- Structured Agent Completion (until_tool) - Force agents to loop until they call a specific tool, returning the result as a clean {:ok, state, %ToolResult{}} tuple. No more hoping the LLM follows your output format: you get structured data you can pattern match on

- SubAgents - Delegate complex tasks to specialized child agents for efficient context management and parallel execution

- GenServer Architecture - Each agent runs as a supervised OTP process with automatic lifecycle management

- Phoenix.Presence Integration - Smart resource management that knows when to shut down idle agents

- PubSub Real-Time Events - Stream agent state, messages, and events to multiple LiveView subscribers

- Middleware System - Extensible plugin architecture for adding capabilities to agents, including composable observability callbacks for OpenTelemetry, metrics, or custom logging

- Cluster-Aware Distribution - Optional Horde-based distribution for running agents across a cluster of nodes with automatic state migration, or run locally on a single node (the default)

- State Persistence - Save and restore agent conversations via optional behaviour modules for agent state and display messages

- Virtual Filesystem - Isolated, in-memory file operations with optional persistence

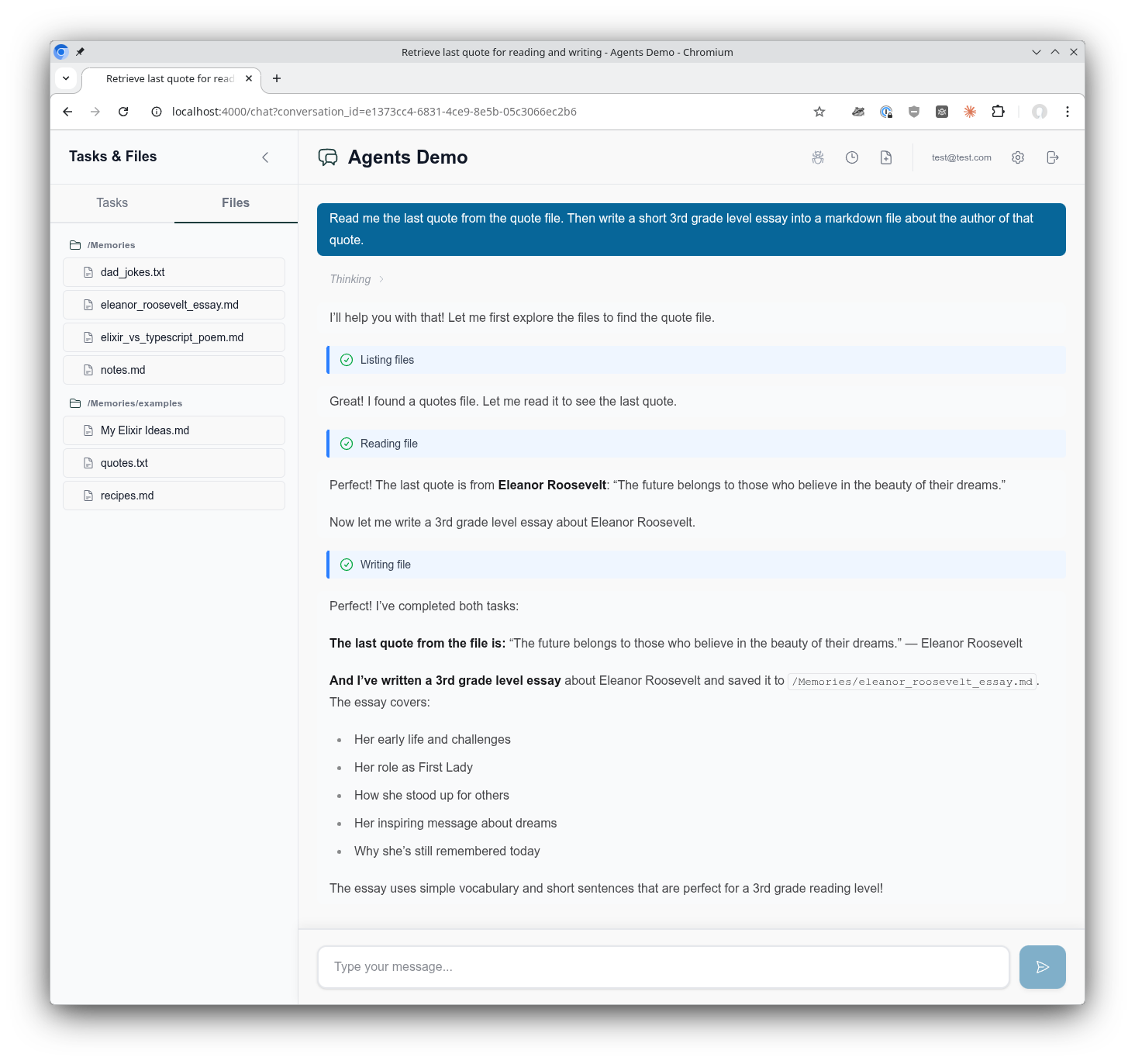

See it in action! Try the agents_demo application to experience Sagents interactively, or add the sagents_live_debugger to your app for real-time insights into agent configuration, state, and event flows.

The AgentsDemo chat interface showing the use of a virtual filesystem, tool call execution, composable middleware, supervised Agentic GenServer assistant, and much more!

Sagents is designed for Elixir developers building interactive AI applications where:

- Users have real-time conversations with AI agents

- Human oversight is required for certain operations (file deletes, API calls, etc.)

- Multiple concurrent conversations need isolated agent processes

- Agent state must persist across sessions

- Real-time UI updates are essential (Phoenix LiveView)

If you're building a simple CLI tool or batch processing pipeline, the core LangChain library may be sufficient. Sagents adds the orchestration layer needed for production interactive applications.

What about non-interactive agents? Certainly! Sagents works perfectly well for background agents without a UI. You'd simply skip the UI state management helpers and omit middleware like HumanInTheLoop. The agent still runs as a supervised GenServer with all the benefits of state persistence, middleware capabilities, and SubAgent delegation. The sagents_live_debugger package remains valuable for gaining visibility into what your agents are doing, even without an end-user interface.

Add sagents to your list of dependencies in mix.exs:

def deps do

[

{:sagents, "~> 0.5.0"}

]

end

LangChain is automatically included as a dependency.

Add Sagents.Supervisor to your application's supervision tree:

# lib/my_app/application.ex

children = [

# ... your other children (Repo, PubSub, etc.)

Sagents.Supervisor

]

This starts the process registry, dynamic supervisors, and filesystem supervisor that Sagents uses to manage agents.

Sagents builds on the Elixir LangChain library for LLM integration. To use Sagents, you need to configure an LLM provider by setting the appropriate API key as an environment variable:

# For Anthropic (Claude)

export ANTHROPIC_API_KEY="your-api-key"

# For OpenAI (GPT)

export OPENAI_API_KEY="your-api-key"

# For Google (Gemini)

export GOOGLE_API_KEY="your-api-key"

Then specify the model when creating your agent:

# Anthropic Claude

alias LangChain.ChatModels.ChatAnthropic

model = ChatAnthropic.new!(%{model: "claude-sonnet-4-5-20250929"})

# OpenAI GPT

alias LangChain.ChatModels.ChatOpenAI

model = ChatOpenAI.new!(%{model: "gpt-4o"})

# Google Gemini

alias LangChain.ChatModels.ChatGoogleAI

model = ChatGoogleAI.new!(%{model: "gemini-2.0-flash-exp"})

For detailed configuration options, see the LangChain documentation.

alias Sagents.{Agent, AgentServer, State}

alias Sagents.Middleware.{TodoList, FileSystem, HumanInTheLoop}

alias LangChain.ChatModels.ChatAnthropic

alias LangChain.Message

# Create agent with middleware capabilities

{:ok, agent} = Agent.new(%{

agent_id: "my-agent-1",

model: ChatAnthropic.new!(%{model: "claude-sonnet-4-5-20250929"}),

base_system_prompt: "You are a helpful coding assistant.",

middleware: [

TodoList,

FileSystem,

{HumanInTheLoop, [

interrupt_on: %{

"write_file" => true,

"delete_file" => true

}

]}

]

})

# Create initial state

state = State.new!(%{

messages: [Message.new_user!("Create a hello world program")]

})

# Start the AgentServer (runs as a supervised GenServer)

{:ok, _pid} = AgentServer.start_link(

agent: agent,

initial_state: state,

pubsub: {Phoenix.PubSub, :my_app_pubsub},

inactivity_timeout: 3_600_000 # 1 hour

)

# Subscribe to real-time events

AgentServer.subscribe("my-agent-1")

# Execute the agent

:ok = AgentServer.execute("my-agent-1")

# In your LiveView or GenServer

def handle_info({:agent, event}, socket) do

case event do

{:status_changed, :running, nil} ->

# Agent started processing

{:noreply, assign(socket, status: :running)}

{:llm_deltas, deltas} ->

# Streaming tokens received

{:noreply, stream_tokens(socket, deltas)}

{:llm_message, message} ->

# Complete message received

{:noreply, add_message(socket, message)}

{:todos_updated, todos} ->

# Agent's TODO list changed

{:noreply, assign(socket, todos: todos)}

{:status_changed, :interrupted, interrupt_data} ->

# Human approval needed

{:noreply, show_approval_dialog(socket, interrupt_data)}

{:status_changed, :idle, nil} ->

# Agent completed

{:noreply, assign(socket, status: :idle)}

{:agent_shutdown, metadata} ->

# Agent shutting down (inactivity or no viewers)

{:noreply, handle_shutdown(socket, metadata)}

end

end

# When agent needs approval, it returns interrupt data

# User reviews and provides decisions

decisions = [

%{type: :approve}, # Approve first tool call

%{type: :edit, arguments: %{"path" => "safe.txt"}}, # Edit second tool call

%{type: :reject} # Reject third tool call

]

# Resume execution with decisions

:ok = AgentServer.resume("my-agent-1", decisions)

Force an agent to return structured output by calling a specific tool:

# ToolResult is the LangChain tool result struct matched below
alias LangChain.Message.ToolResult

# Agent loops until "deliver_answer" is called, then returns the tool result

case Agent.execute(agent, state, until_tool: "deliver_answer") do

{:ok, final_state, %ToolResult{} = result} ->

# Structured data from the target tool

IO.inspect(result.content)

{:error, reason} ->

# LLM stopped without calling the target tool

IO.puts("Error: #{inspect(reason)}")

end

This works through HITL interrupt/resume cycles and with SubAgents: the contract is enforced at every level.
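To make the loop-until-tool contract concrete, here is a minimal plain-Elixir sketch. It is not the Sagents implementation: `UntilToolSketch`, the `model_fun` stub, and the `{:tool_call, name, args}` / `{:text, content}` return shapes are all hypothetical stand-ins for an LLM call, and the error atoms are invented for illustration.

```elixir
defmodule UntilToolSketch do
  @moduledoc """
  Illustrative only: keeps calling a stubbed model until it requests the
  target tool. None of these names come from Sagents.
  """

  # `model_fun` (hypothetical) receives the message history and returns
  # {:tool_call, name, args} or {:text, content}.
  def run(model_fun, messages, target_tool, max_runs \\ 5)

  # Safety limit reached before the target tool was called.
  def run(_model_fun, _messages, _target_tool, 0), do: {:error, :max_runs_exceeded}

  def run(model_fun, messages, target_tool, runs_left) do
    case model_fun.(messages) do
      {:tool_call, ^target_tool, args} ->
        # Target tool called: its arguments are the structured result.
        {:ok, messages, args}

      {:tool_call, other, _args} ->
        # A different tool: record it and loop again.
        run(model_fun, messages ++ [{:tool, other}], target_tool, runs_left - 1)

      {:text, _content} ->
        # The model stopped without calling the target tool.
        {:error, :target_tool_not_called}
    end
  end
end

# A stub model that calls "search" once, then "deliver_answer".
model = fn messages ->
  if {:tool, "search"} in messages do
    {:tool_call, "deliver_answer", %{"answer" => 42}}
  else
    {:tool_call, "search", %{"q" => "example"}}
  end
end

{:ok, _history, result} = UntilToolSketch.run(model, [{:user, "hi"}], "deliver_answer")
IO.inspect(result)
```

The caller only ever sees a structured success or an explicit error, which is what makes the result safe to pattern match on.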

Sagents includes several pre-built middleware components:

| Middleware | Description |

|---|---|

| TodoList | Task management with write_todos tool for tracking multi-step work |

| FileSystem | Virtual filesystem with ls, read_file, write_file, edit_file, find_in_file, edit_lines, delete_file |

| HumanInTheLoop | Pause execution for human approval on configurable tools |

| SubAgent | Delegate tasks to specialized child agents for parallel execution |

| Summarization | Automatic conversation compression when token limits approach |

| PatchToolCalls | Fix dangling tool calls from interrupted conversations |

| ConversationTitle | Auto-generate conversation titles from first user message |

| DebugLog | Local dev tool — writes per-conversation structured logs (messages, tool calls, state changes) to dedicated files for debugging |

{:ok, agent} = Agent.new(%{

# ...

middleware: [

{FileSystem, [

enabled_tools: ["ls", "read_file", "write_file", "edit_file"],

# Optional: persistence callbacks

persistence: MyApp.FilePersistence,

context: %{user_id: current_user.id}

]}

]

})

SubAgents provide efficient context management by isolating complex tasks:

{:ok, agent} = Agent.new(%{

# ...

middleware: [

{SubAgent, [

model: ChatAnthropic.new!(%{model: "claude-sonnet-4-5-20250929"}),

subagents: [

SubAgent.Config.new!(%{

name: "researcher",

description: "Research topics using web search",

system_prompt: "You are an expert researcher...",

tools: [web_search_tool]

}),

SubAgent.Compiled.new!(%{

name: "coder",

description: "Write and review code",

agent: pre_built_coder_agent

})

],

# Prevent recursive SubAgent nesting

block_middleware: [ConversationTitle, Summarization]

]}

]

})

SubAgents also respect HITL permissions - if a SubAgent attempts a protected operation, the interrupt propagates to the parent for approval.

Configure which tools require human approval:

{HumanInTheLoop, [

interrupt_on: %{

# Simple boolean

"write_file" => true,

"delete_file" => true,

# Advanced: customize allowed decisions

"execute_command" => %{

allowed_decisions: [:approve, :reject] # No edit option

}

}

]}

Decision types:

- :approve - Execute with original arguments

- :edit - Execute with modified arguments

- :reject - Skip execution, inform agent of rejection
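The three decision types can be read as a positional mapping over the pending tool calls. The sketch below illustrates that reading in plain Elixir; `DecisionSketch` and the tool-call maps with `:name` and `:arguments` keys are hypothetical and do not reflect the structs Sagents uses internally.

```elixir
defmodule DecisionSketch do
  @moduledoc """
  Illustrative only: pairs pending tool calls with human decisions by
  position. The tool-call maps are hypothetical, not Sagents structs.
  """

  # Returns {to_execute, rejected}, each in the original order.
  def apply_decisions(tool_calls, decisions)
      when length(tool_calls) == length(decisions) do
    tool_calls
    |> Enum.zip(decisions)
    |> Enum.reduce({[], []}, fn
      {call, %{type: :approve}}, {run, rejected} ->
        # Execute with the original arguments.
        {[call | run], rejected}

      {call, %{type: :edit, arguments: args}}, {run, rejected} ->
        # Execute with the reviewer's arguments instead of the model's.
        {[%{call | arguments: args} | run], rejected}

      {call, %{type: :reject}}, {run, rejected} ->
        # Skip execution; the caller can report the rejection to the agent.
        {run, [call | rejected]}
    end)
    |> then(fn {run, rejected} -> {Enum.reverse(run), Enum.reverse(rejected)} end)
  end
end

calls = [
  %{name: "write_file", arguments: %{"path" => "a.txt"}},
  %{name: "write_file", arguments: %{"path" => "unsafe.txt"}},
  %{name: "delete_file", arguments: %{"path" => "b.txt"}}
]

decisions = [
  %{type: :approve},
  %{type: :edit, arguments: %{"path" => "safe.txt"}},
  %{type: :reject}
]

{to_run, rejected} = DecisionSketch.apply_decisions(calls, decisions)
IO.inspect(to_run, label: "execute")
IO.inspect(rejected, label: "rejected")
```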

Create your own middleware by implementing the Sagents.Middleware behaviour:

defmodule MyApp.CustomMiddleware do

@behaviour Sagents.Middleware

@impl true

def init(opts) do

config = %{

enabled: Keyword.get(opts, :enabled, true)

}

{:ok, config}

end

@impl true

def system_prompt(_config) do

"You have access to custom capabilities."

end

@impl true

def tools(config) do

[my_custom_tool(config)]

end

@impl true

def before_model(state, _config) do

# Preprocess state before LLM call

{:ok, state}

end

@impl true

def after_model(state, _config) do

# Postprocess state after LLM response

# Return {:interrupt, state, interrupt_data} to pause for HITL

{:ok, state}

end

@impl true

def handle_message(_message, state, _config) do

# Handle async messages from spawned tasks

{:ok, state}

end

@impl true

def on_server_start(state, _config) do

# Called when AgentServer starts - broadcast initial state

{:ok, state}

end

end

Agent execution in Sagents is an explicit pipeline, not a black box. The default mode composes built-in steps from both LangChain and Sagents:

# This is the entire default run loop (Sagents.Modes.AgentExecution)

defp do_run(chain, opts) do

{:continue, chain}

|> call_llm()

|> check_max_runs(Keyword.put_new(opts, :max_runs, 50))

|> check_pause(opts)

|> check_pre_tool_hitl(opts)

|> execute_tools()

|> propagate_state(opts)

|> check_tool_interrupts(opts)

|> maybe_check_until_tool(opts)

|> continue_or_done_safe(&do_run/2, opts)

end

Every step follows a simple contract: {:continue, chain} means keep going; any other tuple (:ok, :error, :interrupt, :pause) is terminal and passes through unchanged. Write your own steps using the same pattern and drop them into the pipeline.

defmodule MyApp.Modes.Simple do

@behaviour LangChain.Chains.LLMChain.Mode

import LangChain.Chains.LLMChain.Mode.Steps

@impl true

def run(chain, opts) do

chain = ensure_mode_state(chain)

{:continue, chain}

|> call_llm()

|> execute_tools()

|> check_max_runs(opts)

|> continue_or_done(&run/2, opts)

end

end

Assign a mode per agent, so one agent can be a strict tool-caller and another fully conversational:

{:ok, agent} = Agent.new(%{

model: model,

mode: MyApp.Modes.Simple,

# ...

})

| Step | Source | What it does |
|---|---|---|
| call_llm() | LangChain | Single LLM call, tracks run count |
| execute_tools() | LangChain | Execute pending tool calls |
| check_max_runs(opts) | LangChain | Safety limit on LLM calls |
| check_pause(opts) | LangChain | Infrastructure drain / node migration |
| check_until_tool(opts) | LangChain | Terminate when target tool is called |
| check_tool_interrupts(opts) | LangChain | Detect tool-level interrupts |
| continue_or_done(run_fn, opts) | LangChain | Loop or return |
| check_pre_tool_hitl(opts) | Sagents | HITL approval check before tool execution |
| propagate_state(opts) | Sagents | Merge tool result state deltas back |
| continue_or_done_safe(run_fn, opts) | Sagents | Loop or return, with until_tool enforcement |
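The step contract that all of these steps share can be sketched without any LLM at all. In the example below a plain integer stands in for the chain; `StepSketch` and its `add/2`, `check_limit/2`, and `continue_or_done/3` functions are hypothetical illustrations of the pattern, not the real LangChain or Sagents steps.

```elixir
defmodule StepSketch do
  @moduledoc """
  Illustrative only: the {:continue, value} step contract, with an integer
  standing in for the chain. These are not the real pipeline steps.
  """

  # A step acts only on {:continue, _}; every other tuple passes through.
  def add({:continue, value}, n), do: {:continue, value + n}
  def add(terminal, _n), do: terminal

  # A guard step that makes the pipeline terminal once a limit is exceeded.
  def check_limit({:continue, value}, limit) when value > limit,
    do: {:error, value, :limit_exceeded}

  def check_limit(other, _limit), do: other

  # Finish when the target is reached, loop while continuing, and return
  # terminal tuples unchanged.
  def continue_or_done({:continue, value}, _run_fn, target) when value >= target,
    do: {:ok, value}

  def continue_or_done({:continue, value}, run_fn, target), do: run_fn.(value, target)
  def continue_or_done(terminal, _run_fn, _target), do: terminal

  def run(value, target) do
    {:continue, value}
    |> add(3)
    |> check_limit(10)
    |> continue_or_done(&run/2, target)
  end
end

IO.inspect(StepSketch.run(0, 6))
IO.inspect(StepSketch.run(0, 20))
```

Because terminal tuples fall through every later step, a new step can be added anywhere in the pipeline without the other steps needing to know about it.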

Sagents provides generators to scaffold everything you need for conversation-centric agents:

mix sagents.setup MyApp.Conversations \

--scope MyApp.Accounts.Scope \

--owner-type user \

--owner-field user_id

This generates:

- Persistence layer - Database schemas and migration

- Factory module - Agent creation with model/middleware configuration

- Coordinator module - Session management and lifecycle orchestration

All configured to work together seamlessly based on your --owner-type and --owner-field settings.

The mix sagents.setup command creates a complete conversation infrastructure:

- Context module (MyApp.Conversations) with CRUD operations

- Schemas: Conversation, AgentState, DisplayMessage

- Database migration for all tables

Centralizes agent creation at MyApp.Agents.Factory with:

- Model configuration (ChatAnthropic by default, with fallback examples)

- Default middleware stack (TodoList, FileSystem, SubAgent, Summarization, etc.)

- Human-in-the-Loop configuration

- Automatic filesystem scope extraction based on your owner type/field

Key functions to customize:

- get_model_config/0 - Change LLM provider (OpenAI, Ollama, etc.)

- get_fallback_models/0 - Configure model fallbacks for resilience

- base_system_prompt/0 - Define your agent's personality and capabilities

- build_middleware/3 - Add/remove middleware from the stack

- default_interrupt_on/0 - Configure which tools require human approval

- get_filesystem_scope/1 - Customize filesystem scoping strategy

Manages agent lifecycles at MyApp.Agents.Coordinator with:

- Conversation ID → Agent ID mapping

- On-demand agent starting with idempotent session management

- State loading from your Conversations context

- Race condition handling for concurrent starts

- Phoenix.Presence integration for viewer tracking

Key functions to customize:

- conversation_agent_id/1 - Change the agent_id mapping strategy

- create_conversation_state/1 - Customize state loading behavior
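The idempotent start and race handling described for the Coordinator follow a common OTP pattern, sketched here with only the standard library. This is not the generated Coordinator: `CoordinatorSketch`, its nested `Session` GenServer, and the registry and supervisor names are hypothetical, and the "conv-<id>" mapping is assumed from the agent IDs used elsewhere in this README.

```elixir
defmodule CoordinatorSketch do
  @moduledoc """
  Illustrative only: idempotent, race-tolerant session start built on the
  standard Registry and DynamicSupervisor. All names are hypothetical.
  """

  defmodule Session do
    use GenServer

    # Registering under a unique name is what makes concurrent starts safe.
    def start_link(agent_id) do
      GenServer.start_link(__MODULE__, agent_id, name: CoordinatorSketch.via(agent_id))
    end

    @impl true
    def init(agent_id), do: {:ok, agent_id}
  end

  @registry CoordinatorSketch.Registry
  @supervisor CoordinatorSketch.Supervisor

  # Starts the registry and dynamic supervisor this sketch relies on.
  def start_infra do
    {:ok, _} = Registry.start_link(keys: :unique, name: @registry)
    {:ok, _} = DynamicSupervisor.start_link(strategy: :one_for_one, name: @supervisor)
    :ok
  end

  # Conversation ID -> agent ID mapping (assumed "conv-<id>" form).
  def conversation_agent_id(conversation_id), do: "conv-#{conversation_id}"

  def via(agent_id), do: {:via, Registry, {@registry, {:agent_server, agent_id}}}

  # Returns the running session, starting it only if needed.
  def ensure_started(conversation_id) do
    agent_id = conversation_agent_id(conversation_id)
    spec = %{id: agent_id, start: {Session, :start_link, [agent_id]}, restart: :temporary}

    case DynamicSupervisor.start_child(@supervisor, spec) do
      {:ok, pid} -> {:ok, pid}
      # A concurrent caller won the race; reuse its process.
      {:error, {:already_started, pid}} -> {:ok, pid}
      other -> other
    end
  end
end

:ok = CoordinatorSketch.start_infra()
{:ok, first} = CoordinatorSketch.ensure_started(7)
{:ok, second} = CoordinatorSketch.ensure_started(7)
IO.inspect(first == second, label: "same process")
```

Treating `{:error, {:already_started, pid}}` as success means two LiveViews mounting the same conversation at the same moment both end up attached to one process.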

For Phoenix LiveView integration, generate a helpers module with reusable handlers for all agent events:

mix sagents.gen.live_helpers MyAppWeb.AgentLiveHelpers \

--context MyApp.Conversations

This generates a module with handler functions that follow the LiveView socket-in/socket-out pattern:

- Status handlers - handle_status_running/1, handle_status_idle/1, handle_status_cancelled/1, handle_status_error/2, handle_status_interrupted/2

- Message handlers - handle_llm_deltas/2, handle_llm_message_complete/1, handle_display_message_saved/2

- Tool execution handlers - handle_tool_call_identified/2, handle_tool_execution_started/2, handle_tool_execution_completed/3, handle_tool_execution_failed/3

- Lifecycle handlers - handle_conversation_title_generated/3, handle_agent_shutdown/2

- Core helpers - persist_agent_state/2, reload_messages_from_db/1, update_streaming_message/2

Use them in your LiveView:

defmodule MyAppWeb.ChatLive do

alias MyAppWeb.AgentLiveHelpers

def handle_info({:agent, {:status_changed, :running, nil}}, socket) do

{:noreply, AgentLiveHelpers.handle_status_running(socket)}

end

def handle_info({:agent, {:llm_deltas, deltas}}, socket) do

{:noreply, AgentLiveHelpers.handle_llm_deltas(socket, deltas)}

end

def handle_info({:agent, {:status_changed, :idle, _data}}, socket) do

{:noreply, AgentLiveHelpers.handle_status_idle(socket)}

end

end

Options:

- --context (required) - Your conversations context module

- --test-path - Custom test file directory (default: inferred from module path)

- --no-test - Skip generating the test file

mix sagents.setup MyApp.Conversations \

--scope MyApp.Accounts.Scope \

--owner-type user \

--owner-field user_id \

--factory MyApp.Agents.Factory \

--coordinator MyApp.Agents.Coordinator \

--pubsub MyApp.PubSub \

--presence MyAppWeb.Presence \

--table-prefix sagents_

All options have sensible defaults based on your context module and Phoenix conventions.

For a fully customized example, see the agents_demo project.

# Create conversation

{:ok, conversation} = Conversations.create_conversation(scope, %{title: "My Chat"})

# Save state during execution

state = AgentServer.export_state(agent_id)

Conversations.save_agent_state(conversation.id, state)

# Restore conversation later

{:ok, persisted_state} = Conversations.load_agent_state(conversation.id)

# Create agent from code (middleware/tools come from code, not database)

{:ok, agent} = MyApp.AgentFactory.create_agent(agent_id: "conv-#{conversation.id}")

# Start with restored state

{:ok, pid} = AgentServer.start_link_from_state(

persisted_state,

agent: agent,

agent_id: "conv-#{conversation.id}",

pubsub: {Phoenix.PubSub, :my_pubsub}

)

Sagents uses a flexible supervision architecture built on OTP principles. Process discovery is handled by Sagents.ProcessRegistry, which abstracts over Registry (local) and Horde.Registry (distributed) based on your distribution config:

Sagents.Supervisor (added to your application's supervision tree)

├── Sagents.ProcessRegistry (Registry or Horde.Registry)

│ └── Process Discovery via Registry Keys:

│ ├── {:agent_supervisor, agent_id}

│ ├── {:agent_server, agent_id}

│ ├── {:sub_agents_supervisor, agent_id}

│ └── {:filesystem_server, scope_key}

│

├── Sagents.ProcessSupervisor (DynamicSupervisor or Horde.DynamicSupervisor)

│ ├── AgentSupervisor ("conversation-1")

│ │ ├── AgentServer (registers as {:agent_server, "conversation-1"})

│ │ │ └── Broadcasts on topic: "agent_server:conversation-1"

│ │ └── SubAgentsDynamicSupervisor

│ │ └── SubAgentServer (temporary child agents)

│ │

│ └── AgentSupervisor ("conversation-2")

│ ├── AgentServer

│ └── SubAgentsDynamicSupervisor

│

└── FileSystemSupervisor (independent, flexible scoping)

├── FileSystemServer ({:user, 1}) # User-scoped

├── FileSystemServer ({:user, 2})

└── FileSystemServer ({:project, 42}) # Project-scoped

Registry-Based Discovery: All processes register with Sagents.ProcessRegistry using structured tuple keys. Process lookup happens through the registry, not supervision tree traversal. In local mode this uses Registry; in distributed mode it uses Horde.Registry for cluster-wide discovery.
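As a rough illustration of key-based discovery in local mode, the sketch below uses Elixir's standard Registry directly. `DiscoverySketch` and its registry name are hypothetical; only the tuple key shapes are taken from the tree above, and Sagents.ProcessRegistry itself may expose a different interface.

```elixir
defmodule DiscoverySketch do
  @moduledoc """
  Illustrative only: process lookup by structured tuple key using the
  standard Registry. The registry name is hypothetical.
  """

  @registry DiscoverySketch.Registry

  def start, do: Registry.start_link(keys: :unique, name: @registry)

  # The calling process registers itself under a structured key.
  def register(key), do: Registry.register(@registry, key, nil)

  # Any process can resolve a key to a pid without walking a supervision tree.
  def whereis(key) do
    case Registry.lookup(@registry, key) do
      [{pid, _value}] -> {:ok, pid}
      [] -> :error
    end
  end
end

{:ok, _} = DiscoverySketch.start()
{:ok, _} = DiscoverySketch.register({:agent_server, "conversation-1"})

IO.inspect(DiscoverySketch.whereis({:agent_server, "conversation-1"}))
IO.inspect(DiscoverySketch.whereis({:filesystem_server, {:user, 1}}))
```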

Dynamic Agent Lifecycle: AgentSupervisor instances are started on-demand by the Coordinator via AgentSupervisor.start_link_sync/1. The _sync variant waits for full registration before returning, preventing race conditions when immediately subscribing to agent events.

Independent Filesystem Scoping: FileSystemSupervisor is separate from agent supervision, allowing flexible lifetime and scope management:

- User-scoped filesystem shared across multiple conversations

- Project-scoped filesystem shared across multiple users

- Organization-scoped filesystem for team collaboration

- Agents reference filesystems by scope_key, not PID

Supervision Strategy: Each AgentSupervisor uses :rest_for_one strategy:

- If AgentServer crashes → SubAgentsDynamicSupervisor restarts

- If SubAgentsDynamicSupervisor crashes → only it restarts

- All children use restart: :temporary (no automatic restart)

Agents automatically shut down after inactivity:

AgentServer.start_link(

agent: agent,

inactivity_timeout: 3_600_000 # 1 hour (default: 5 minutes)

# or nil/:infinity to disable

)
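For intuition about how an inactivity shutdown can work, here is a minimal sketch using the built-in GenServer timeout. It is not the AgentServer implementation and ignores viewer tracking entirely; `IdleSketch` and `touch/1` are hypothetical, and only the `inactivity_timeout` option name, its 5 minute default, and the nil/:infinity behaviour are taken from the snippet above.

```elixir
defmodule IdleSketch do
  @moduledoc """
  Illustrative only: a GenServer that stops itself after a period with no
  messages, using the built-in GenServer timeout. Not the AgentServer code.
  """
  use GenServer

  def start_link(opts), do: GenServer.start_link(__MODULE__, opts)

  # Any call counts as activity in this sketch.
  def touch(pid), do: GenServer.call(pid, :touch)

  @impl true
  def init(opts) do
    # nil and :infinity both disable the idle shutdown.
    timeout = Keyword.get(opts, :inactivity_timeout, 300_000) || :infinity
    {:ok, timeout, timeout}
  end

  @impl true
  def handle_call(:touch, _from, timeout) do
    # Returning the timeout again restarts the idle countdown.
    {:reply, :ok, timeout, timeout}
  end

  @impl true
  def handle_info(:timeout, timeout) do
    # No message arrived within the window: shut down cleanly.
    {:stop, :normal, timeout}
  end
end

{:ok, pid} = IdleSketch.start_link(inactivity_timeout: 50)
ref = Process.monitor(pid)
:ok = IdleSketch.touch(pid)

receive do
  {:DOWN, ^ref, :process, ^pid, reason} -> IO.inspect(reason, label: "stopped")
after
  1_000 -> IO.puts("still running")
end
```

Stopping with `:normal` keeps the shutdown quiet: linked processes are not taken down and no crash report is logged.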