Every CVE in your AI stack is a credential leak waiting to happen. agent-bom follows the chain end-to-end and tells you exactly which fix collapses it.

CVE-2025-1234 (CRITICAL · CVSS 9.8 · CISA KEV)

|── better-sqlite3@9.0.0 (npm)

|── sqlite-mcp (MCP Server · unverified · root)

|── Cursor IDE (Agent · 4 servers · 12 tools)

|── ANTHROPIC_KEY, DB_URL, AWS_SECRET (Credentials exposed)

|── query_db, read_file, write_file, run_shell (Tools at risk)

Fix: upgrade better-sqlite3 → 11.7.0

Blast radius is the core idea: CVE -> package -> MCP server -> agent -> credentials -> tools. CWE-aware impact keeps a DoS from being reported like credential compromise.
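To make the chain concrete, here is a minimal sketch of a blast-radius walk over the sample finding above. The edge list, the function names, and the CWE classification are illustrative assumptions; the README does not show agent-bom's real graph model or scoring.

```python
from collections import deque

# Hypothetical edge list mirroring the sample chain above.
# This is not agent-bom's internal data model.
EDGES = {
    "CVE-2025-1234": ["better-sqlite3@9.0.0"],
    "better-sqlite3@9.0.0": ["sqlite-mcp"],
    "sqlite-mcp": ["Cursor IDE"],
    "Cursor IDE": [
        "ANTHROPIC_KEY", "DB_URL", "AWS_SECRET",
        "query_db", "read_file", "write_file", "run_shell",
    ],
}

# Assumed classification: availability-only weaknesses (for example
# CWE-400, uncontrolled resource consumption) do not expose credentials.
AVAILABILITY_ONLY = {"CWE-400"}


def blast_radius(start: str, edges: dict[str, list[str]]) -> list[str]:
    """Return every node reachable from `start`, in breadth-first order."""
    seen, order, queue = {start}, [], deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                order.append(nxt)
                queue.append(nxt)
    return order


def exposes_credentials(cwe: str) -> bool:
    """CWE-aware impact: a DoS-class weakness is not a credential leak."""
    return cwe not in AVAILABILITY_ONLY


reached = blast_radius("CVE-2025-1234", EDGES)
print(len(reached), "nodes reachable from the CVE")
```

The point of the second function is the one made in the prose: reachability alone says what an attacker could touch, while the weakness class decides whether that reach is reported as credential compromise.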

agent-bom agents --demo --offline

The demo uses a curated sample so the output stays reproducible across releases. Every CVE shown is a real OSV/GHSA match against a genuinely vulnerable package version, with no fabricated findings (locked in by tests/test_demo_inventory_accuracy.py). For a real scan, run `agent-bom agents`, or add `-p .` to fold project manifests and lockfiles into the same result.

| Goal | Run | What you get |
|---|---|---|
| Find what is installed and reachable | `agent-bom agents -p .` | Agent discovery, MCP mapping, project dependency findings, blast radius |
| Turn findings into a fix plan | `agent-bom agents -p . --remediate remediation.md` | Prioritized remediation with fix versions and reachable impact |
| Check a package before install | `agent-bom check flask@2.2.0 --ecosystem pypi` | Machine-readable pre-install verdict |
| Scan a container image | `agent-bom image nginx:latest` | OS and package CVEs with fixability |
| Audit IaC or cloud posture | `agent-bom iac Dockerfile k8s/ infra/main.tf` | Misconfigurations, manifest hardening, optional live cluster posture |
| Review findings in a persistent graph | `agent-bom serve` | API plus bundled local UI on one machine; Kubernetes and Compose split the API image (`agentbom/agent-bom`) from the browser UI image (`agentbom/agent-bom-ui`) |
| Inspect live MCP traffic | `agent-bom proxy "<server command>"` | Inline runtime inspection, detector chaining, response/argument review |

pip install agent-bom # CLI

# pipx install agent-bom # isolated global install

# uvx agent-bom --help # ephemeral run

agent-bom agents # discover + scan local AI agents and MCP servers

agent-bom agents -p . # add project lockfiles + manifests

agent-bom check flask@2.0.0 --ecosystem pypi # pre-install CVE gate

agent-bom image nginx:latest # container image scan

agent-bom iac Dockerfile k8s/ infra/main.tf # IaC scan, optionally `--k8s-live`

After the first scan:

agent-bom agents -p . --remediate remediation.md # fix-first plan

agent-bom agents -p . --compliance-export fedramp -o evidence.zip # tamper-evident evidence bundle

pip install 'agent-bom[ui]' && agent-bom serve # API + bundled local UI

The screenshots below come from the live product path, using the built-in demo data pushed through the API. See docs/CAPTURE.md for the canonical capture protocol.

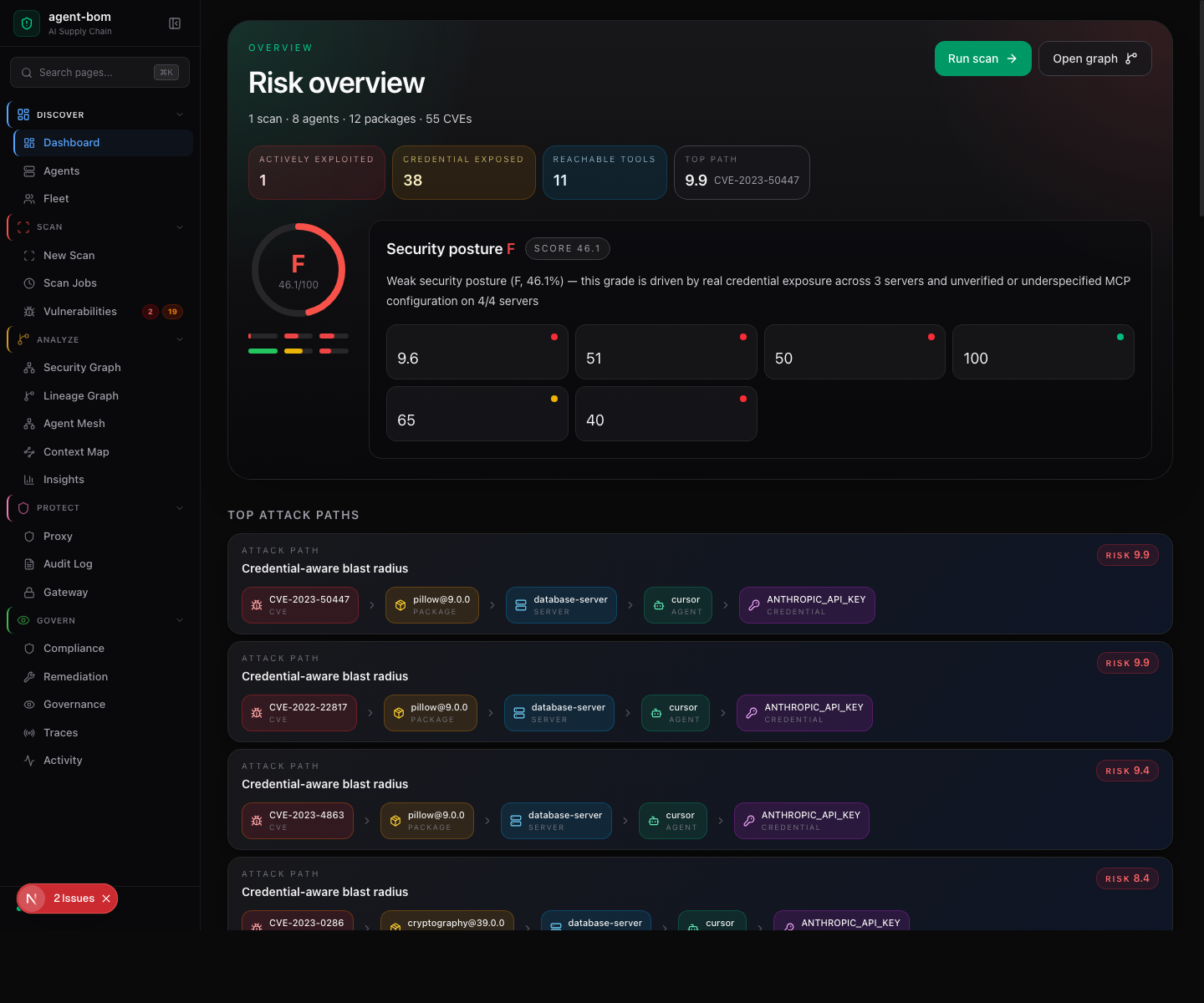

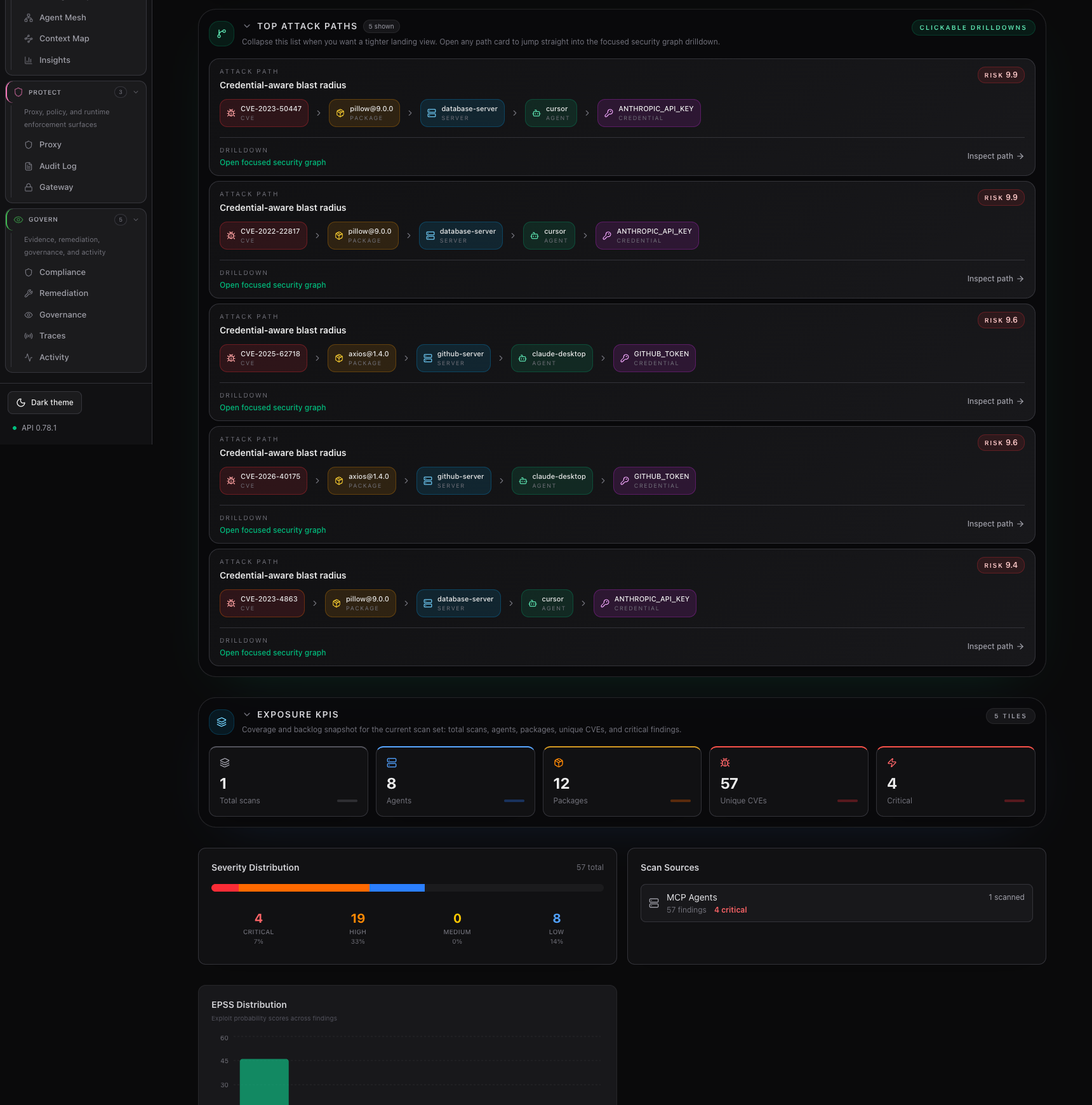

The landing page is the Risk overview: a letter-grade gauge, the four headline counters (actively exploited · credentials exposed · reachable tools · top attack-path risk), the security-posture grade with sub-scores (policy + controls, open evidence, packages + CVEs, reach + exposure, MCP configuration), and the score breakdown for each driver.

The second dashboard frame focuses on the fix-first path list and the coverage / backlog KPIs below it, so the attack-path drilldown stays readable without a tall stitched screenshot.

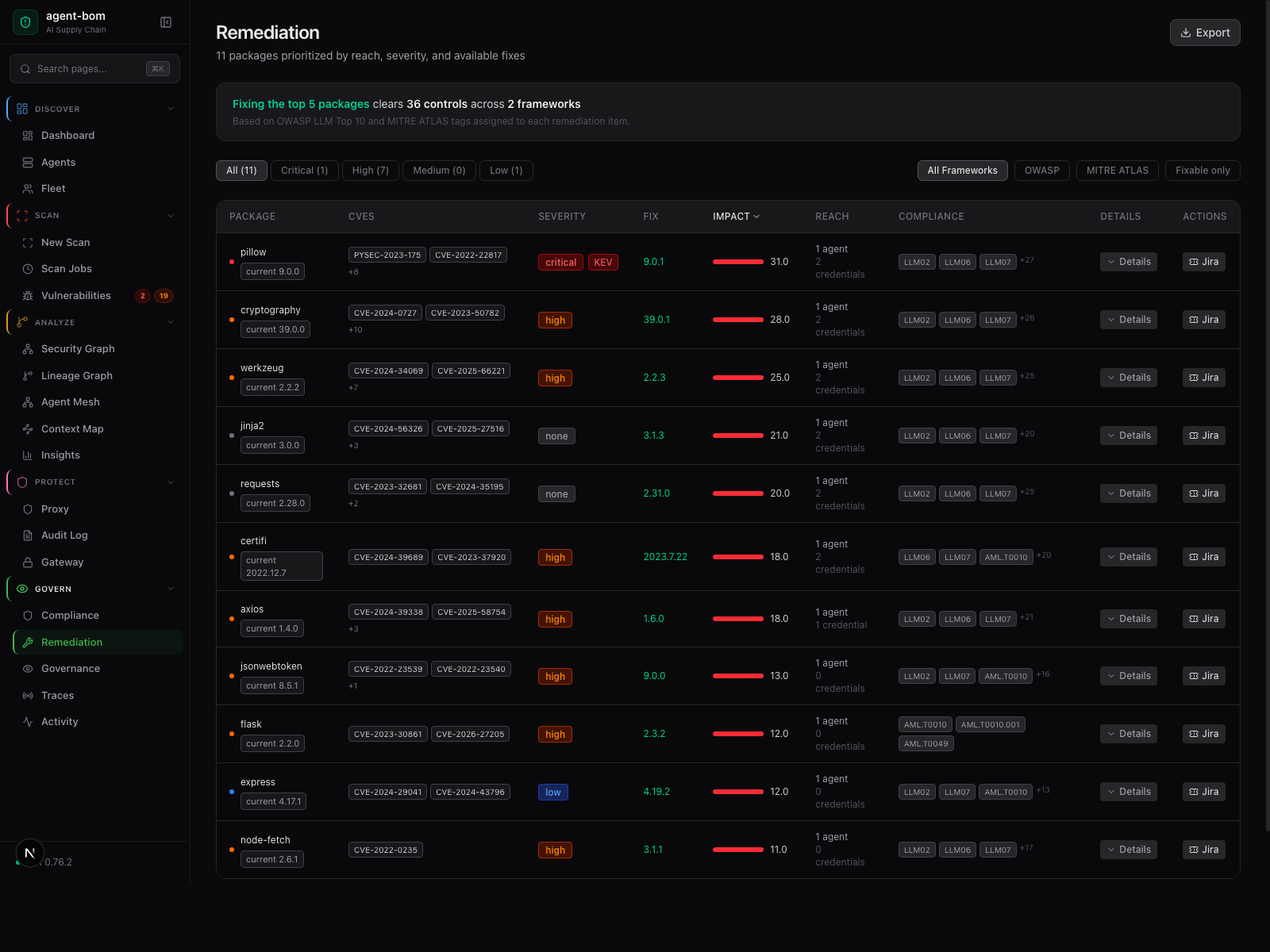

Risk, reach, fix version, and framework context in one review table — operators act without jumping between pages.

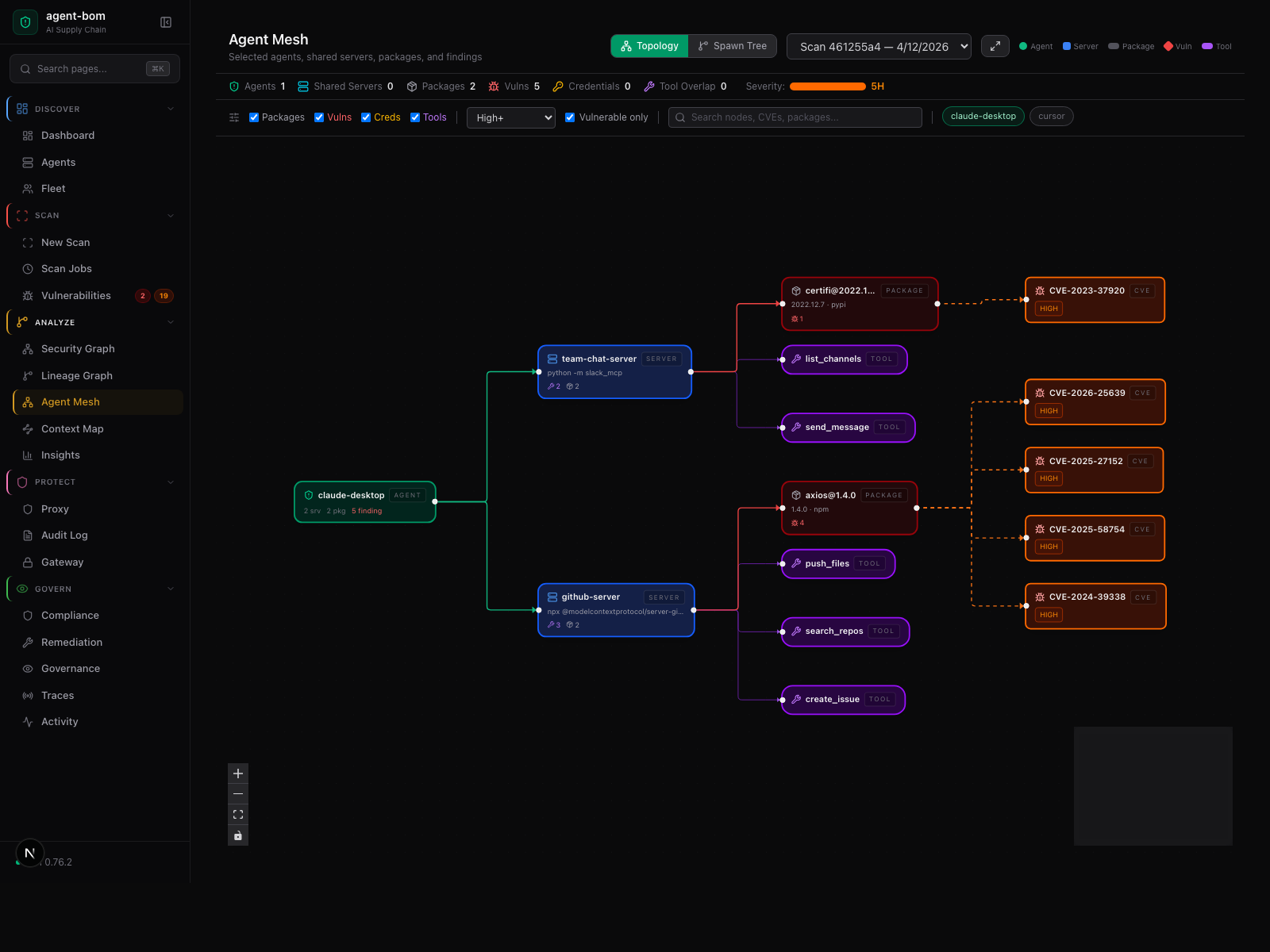

Agent-centered shared-infrastructure graph — selected agents, their shared MCP servers, tools, packages, and findings.

How a scan moves through the system — five stages, no source code or credentials leave your machine

Inside the engine: parsers, taint, call graph, blast-radius scoring.

External calls are limited to package metadata, version lookups, and CVE enrichment.

agent-bom runs end-to-end inside your infrastructure — your AWS account, your VPC, your EKS cluster, your Postgres / ClickHouse / Snowflake, your SSO, your KMS. No hosted control plane. No mandatory vendor backend. No telemetry.

This section is deployment-first: what runs in your infrastructure, what the data path looks like, which stores hold state, and how a focused pilot narrows that same architecture without inventing a different product. The detailed rollout runbooks live under site-docs/deployment/.

Keep the deployment story split into two views:

- deployment topology: what runs in the customer's environment

- runtime MCP flow: how proxy, gateway, API, and upstream MCP calls interact

Everything agent-bom ships runs inside one trust boundary: the customer's VPC, EKS account, or self-managed cluster. The normal cross-boundary paths are inbound OIDC and outbound, policy-audited MCP upstream calls. Enrichment to OSV/NVD is optional and allow-listable.

| Layer | Lives in | Scales via | Talks to |
|---|---|---|---|
| Ingress + auth | ALB / Istio Gateway + OIDC | — | Corporate IdP (Okta / Entra / Google) |
| Runtime MCP plane | gateway + selected proxy sidecars / local wrappers | HPA + PDB | Remote MCPs, `/v1/proxy/audit` |
| Control plane | api, ui, jobs, backup (Helm) | HPA + CronJob | Data plane, OTEL, Prometheus |
| Data plane | Customer-owned Postgres (+ optional ClickHouse, S3) | Operator-managed | — |
| Platform glue | ExternalSecrets, ServiceMonitor, OTEL collector | Operator-managed | AWS Secrets Manager / Vault / Grafana |

flowchart TB

classDef ext fill:#0b1220,stroke:#475569,color:#cbd5e1,stroke-dasharray:3 3

classDef edge fill:#111827,stroke:#38bdf8,color:#e0f2fe

classDef ctrl fill:#0f172a,stroke:#6366f1,color:#e0e7ff

classDef run fill:#0f172a,stroke:#10b981,color:#d1fae5

classDef data fill:#0f172a,stroke:#f59e0b,color:#fef3c7

classDef ops fill:#0f172a,stroke:#64748b,color:#cbd5e1

Browser["Browser operators"]:::ext

IdP["Corporate IdP"]:::ext

CI["CI + scheduled scans"]:::ext

Remote["Remote MCPs"]:::ext

Intel["OSV / NVD / GHSA<br/>optional enrichment"]:::ext

subgraph Customer["Customer VPC / EKS / self-managed cluster"]

direction TB

Ingress["Ingress + TLS"]:::edge

subgraph Control["Control plane"]

direction LR

UI["UI<br/>same-origin browser app"]:::ctrl

API["API<br/>auth · findings · fleet · audit"]:::ctrl

Jobs["Workers<br/>CronJob / Job"]:::ctrl

Backup["Backup job"]:::ctrl

end

subgraph Runtime["Runtime MCP plane"]

direction LR

Proxy["Proxy<br/>sidecar or laptop wrapper"]:::run

Gateway["Gateway<br/>agent-bom gateway serve"]:::run

end

subgraph Data["Customer-owned data"]

direction LR

PG[("Postgres / Supabase")]:::data

CH[("ClickHouse optional")]:::data

S3[("S3 optional")]:::data

end

subgraph Platform["Platform services"]

direction LR

Secrets["ExternalSecrets / IRSA / Vault"]:::ops

Obs["OTEL + Prometheus"]:::ops

end

end

Browser --> Ingress

IdP -. OIDC .-> Ingress

Ingress --> UI

UI -->|same-origin API calls| API

CI --> Jobs

Jobs -->|results + inventory| API

Proxy -->|audited relay| Gateway

Gateway -->|POST /v1/proxy/audit| API

Gateway -->|policy-audited upstream| Remote

API --> PG

API -. optional analytics .-> CH

Backup --> S3

Secrets --> API

Secrets --> Gateway

API --> Obs

Gateway --> Obs

API -. optional egress .-> Intel

Deployment truth: the UI is not the collector. The browser drives workflows, the API owns control-plane state, workers do scans, and proxy plus gateway handle runtime MCP traffic. For the role split, see the Self-Hosted Product Architecture.

sequenceDiagram

participant Client as Developer or workload client

participant Proxy as agent-bom proxy

participant Gateway as agent-bom gateway

participant API as Control-plane API

participant Remote as Remote MCP

participant Store as Postgres / audit store

Client->>Proxy: MCP JSON-RPC (stdio / SSE / HTTP)

Proxy->>Proxy: local policy + runtime checks

Proxy->>Gateway: audited relay

Gateway->>API: policy fetch / POST /v1/proxy/audit

Gateway->>Remote: upstream MCP call

Remote-->>Gateway: MCP response

Gateway-->>Proxy: response + shared policy result

Proxy->>Proxy: optional VLD / OCR redaction

Proxy-->>Client: safe response

API->>Store: persist audit, findings, graph links

- The client talks to a local or sidecar `agent-bom proxy`.
- The proxy applies local runtime checks and relays to the central `agent-bom gateway`.
- The gateway evaluates shared policy, records audit to `/v1/proxy/audit`, then calls the remote MCP upstream.
- The response returns on the same path; image responses can run through the visual leak detector before the client sees them.
- The API persists audit, findings, and graph links for the UI, exports, and compliance surfaces.
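The relay steps above can be sketched as a small in-memory simulation. The policy shape (`blocked_tools`), the function names, and the audit record fields are hypothetical; agent-bom's real JSON policy schema and audit payload are not shown in this README. The request uses the standard MCP `tools/call` JSON-RPC method.

```python
# Hypothetical policy shape, for illustration only.
POLICY = {"blocked_tools": ["run_shell"]}


def gateway_decide(message: dict, policy: dict) -> dict:
    """Evaluate one MCP JSON-RPC message and return an audit record."""
    tool = None
    if message.get("method") == "tools/call":
        tool = message.get("params", {}).get("name")
    allowed = tool not in policy["blocked_tools"]
    return {"id": message.get("id"), "tool": tool, "allowed": allowed}


def relay(message: dict, policy: dict, upstream, audit_log: list) -> dict:
    """Proxy -> gateway -> upstream, recording an audit entry either way."""
    record = gateway_decide(message, policy)
    audit_log.append(record)  # stands in for the push to /v1/proxy/audit
    if not record["allowed"]:
        # -32000 sits in the JSON-RPC range reserved for server errors.
        return {
            "jsonrpc": "2.0",
            "id": message.get("id"),
            "error": {"code": -32000, "message": "blocked by policy"},
        }
    return upstream(message)


def fake_upstream(message: dict) -> dict:
    """Stand-in for the remote MCP server."""
    return {"jsonrpc": "2.0", "id": message["id"], "result": {"ok": True}}


audit: list = []
blocked = relay(
    {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
     "params": {"name": "run_shell", "arguments": {}}},
    POLICY, fake_upstream, audit,
)
allowed = relay(
    {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
     "params": {"name": "query_db", "arguments": {}}},
    POLICY, fake_upstream, audit,
)
print(blocked.get("error"), allowed.get("result"))
```

Note that the audit entry is written before the decision is enforced, so blocked calls leave the same trail as allowed ones.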

| Owner | Owns | Touches agent-bom via |

|---|---|---|

| Security / platform team | Policy, fleet, remediation, gateway upstreams | Dashboard, API, Helm values |

| Developers + service owners | Local scans, CI gates, proxy config on their workload | CLI, GitHub Action, proxy sidecar |

| Platform / SRE | Cluster, ingress, secrets, observability | Helm chart, ExternalSecrets, ServiceMonitor |

flowchart LR

sec["Security / platform team"] --> ui["Dashboard + API<br/>policy · fleet · remediation"]

dev["Developers + service owners"] --> cli["CLI + CI gate + proxy sidecar"]

sre["Platform / SRE"] --> helm["Helm chart + secrets + observability"]

cli --> ui

helm --> ui

This is the architecture. A pilot is just a narrower rollout profile over the same surfaces and stores.

| Profile | Turn on first | Keep optional until needed |

|---|---|---|

| Local + CI/CD gate | CLI scans + GitHub Action + HTML/SARIF output | fleet, proxy, gateway, ClickHouse |

| Focused pilot | scan + fleet + proxy + API/UI | ClickHouse, Snowflake, full gateway rollout |

| Standard self-hosted | scan + fleet + proxy + gateway + API/UI | ClickHouse |

| Regulated / zero-trust | standard self-hosted + Istio/Kyverno/ExternalSecret | Snowflake |

The gateway closes the biggest deployment gap for remote MCP usage: one central URL in your EKS fronts N remote MCP upstreams, so laptops do not each need their own proxy config. See the multi-MCP gateway design and the focused EKS rollout.

| Surface | CLI / route | What it does | Runs as |
|---|---|---|---|
| scan | `agent-bom agents`, `agent-bom image`, `agent-bom iac` | Discovery, inventory, CVE enrichment, blast-radius scoring | CLI + CronJob |
| CI/CD gate | GitHub Action `uses: msaad00/agent-bom@v0.81.0` | Pull-request and release gating, SARIF, policy-driven exits | GitHub Actions runner |
| fleet | `POST /v1/fleet/sync` + CLI `--push-url` | Endpoint + collector fleet ingest with tenant scoping | API endpoint |
| proxy / runtime | `agent-bom proxy` (stdio) / `--sse` (HTTP) | Inline MCP JSON-RPC inspection + policy enforcement | K8s sidecar or laptop wrapper |
| gateway | `agent-bom gateway serve`, `/v1/gateway/policies`, `/v1/proxy/audit` | Central HTTP traffic plane plus shared policy/audit plane | Service + API routes |
| API + UI | `/v1/*` + Next.js dashboard | Findings, graph, remediation, compliance, posture | 2 Deployments + HPA |
| OTEL / observability | `POST /v1/traces`, `--otel-endpoint`, API tracing | W3C trace context, OTLP export, and OTEL trace ingest for runtime evidence | API route + CLI/runtime hooks |

By default, findings, fleet data, audit logs, graph state, and remediation outputs stay in your infrastructure. Optional egress (OSV lookups, NVD enrichment, Slack / Jira / Vanta / Drata webhooks, SIEM / OTLP) is operator-controlled.

agent-bom already treats OpenTelemetry as a real product surface, not a bolt-on:

- the API preserves W3C `traceparent` context and can export request spans over OTLP/HTTP
- the CLI can emit OTLP metrics and scan context to your collector with `--otel-endpoint`
- the control plane can ingest OTEL traces at `POST /v1/traces`
- runtime protection can consume OTEL traces as evidence, not just emit them
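For readers unfamiliar with the header being preserved, here is a minimal sketch of parsing a W3C `traceparent` value in its version `00` layout (`version-traceid-parentid-flags`, lowercase hex). The function name is an illustration, not agent-bom's API, and the sketch does not attempt the forward-compatibility rules for later versions.

```python
import re

# Version 00 layout: 2 hex version, 32 hex trace-id, 16 hex parent-id, 2 hex flags.
_TRACEPARENT = re.compile(
    r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)


def parse_traceparent(header: str):
    """Return trace fields from a traceparent header, or None if invalid."""
    match = _TRACEPARENT.match(header.strip())
    if not match:
        return None
    version, trace_id, parent_id, flags = match.groups()
    # Version "ff" and all-zero IDs are invalid per the specification.
    if version == "ff" or set(trace_id) == {"0"} or set(parent_id) == {"0"}:
        return None
    return {
        "trace_id": trace_id,
        "parent_id": parent_id,
        "sampled": bool(int(flags, 16) & 0x01),  # lowest bit is the sampled flag
    }


example = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
print(parse_traceparent(example))
```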

Policy is different. The shipped gateway and proxy use the repo's native JSON policy engine, not OPA/Rego. That is an intentional product choice documented in ADR-002: lower operator complexity, no extra OPA binary, and one policy model shared across scan, gateway, proxy, and runtime.

What makes sense today:

- promote OTEL as a first-class interoperability path

- keep the native policy engine as the default shipped control plane

- treat OPA/Rego as a future enterprise interop option, such as bundle import/export or an external decision hook, not as a replacement for the current engine

Pilot teams pick per workload:

- `agent-bom gateway serve`: central multi-upstream HTTP gateway. One service in your EKS fronts N MCP upstreams (SaaS MCPs, Snowflake-hosted MCPs, in-cluster MCPs) and every laptop points at `/mcp/{server-name}` over HTTP/SSE. Fleet-driven auto-discovery via `--from-control-plane` so the upstream list comes from the scans your team already runs, not a blank YAML. Source: `src/agent_bom/gateway_server.py`, CLI: `src/agent_bom/cli/_gateway.py`, tests: `tests/test_gateway_server.py`.
- `agent-bom proxy`: per-MCP sidecar or stdio wrapper (`proxy.py:527` stdio, `proxy.py:258` HTTP/SSE). One instance per server. The honest mode for stdio-only MCPs and for workload-local enforcement where a shared traffic plane would hairpin.
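The `/mcp/{server-name}` addressing can be pictured as a plain lookup from path segment to upstream URL. This is a sketch under assumptions: the registry contents, the URLs, and the function name are hypothetical, and the real gateway in `src/agent_bom/gateway_server.py` may resolve upstreams differently.

```python
# Hypothetical upstream registry; names and URLs are illustrative only.
UPSTREAMS = {
    "jira": "https://mcp.example.com/jira",
    "snowflake": "https://mcp.example.com/snowflake",
}


def resolve_upstream(path: str, upstreams: dict[str, str]):
    """Map /mcp/{server-name}[/...] to its upstream URL, or None if unknown."""
    parts = path.strip("/").split("/")
    if len(parts) < 2 or parts[0] != "mcp":
        return None
    return upstreams.get(parts[1])


print(resolve_upstream("/mcp/jira", UPSTREAMS))
```

With fleet-driven discovery, the registry would be populated from scan results instead of being written by hand, which is the benefit the first bullet describes.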

Both modes pull the same gateway policy (/v1/gateway/policies) and push to the same audit sink (/v1/proxy/audit). Central control, edge enforcement, no hairpinning.

agent-bom does not treat every backend as interchangeable. Pick per capability — full detail in backend-parity.md.

| Capability | SQLite | Postgres / Supabase (default) | ClickHouse (analytics) | Snowflake (warehouse-native) |

|---|---|---|---|---|

| Scan jobs + fleet agents + gateway policies + audit log | ✓ | ✓ | n/a (not a transactional store) | ✓ |

| Exceptions, schedules, graph | ✓ (SQLite stores ship in repo) | ✓ | n/a | n/a (not yet ported) |

| API keys + trend store | Postgres-only | ✓ | n/a | n/a (not yet ported) |

| Row-level tenant isolation | ✓ | ✓ | ✓ | ✓ (governance-oriented) |

| High-volume OLAP / time-series | n/a | n/a | ✓ | ✓ (via Snowpark) |

| Best for | laptops, single-node | standard EKS pilot | audit + analytics at scale | you already live in Snowflake |

Source: src/agent_bom/api/store.py, postgres_store.py, clickhouse_store.py, snowflake_store.py. Parity roadmap: backend-parity.md.
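One way to read the matrix is as a lookup: given the capabilities a deployment needs, which single backend covers all of them? The sketch below transcribes four rows of the table; the capability keys and the function name are made up for this example.

```python
# Transcribed from the capability table above (True = supported).
# Keys are illustrative names, not agent-bom identifiers.
SUPPORT = {
    "scan_fleet_policy_audit": {
        "sqlite": True, "postgres": True, "clickhouse": False, "snowflake": True},
    "exceptions_schedules_graph": {
        "sqlite": True, "postgres": True, "clickhouse": False, "snowflake": False},
    "api_keys_trend_store": {
        "sqlite": False, "postgres": True, "clickhouse": False, "snowflake": False},
    "olap_time_series": {
        "sqlite": False, "postgres": False, "clickhouse": True, "snowflake": True},
}

BACKENDS = ["sqlite", "postgres", "clickhouse", "snowflake"]


def backends_for(required: list[str]) -> list[str]:
    """Return the backends that support every capability in `required`."""
    return [b for b in BACKENDS if all(SUPPORT[c][b] for c in required)]


print(backends_for(["scan_fleet_policy_audit", "api_keys_trend_store"]))
print(backends_for(["api_keys_trend_store", "olap_time_series"]))
```

The second call returns an empty list: no single backend covers both API keys and high-volume OLAP, which is why the analytics-heavy shape pairs Postgres with ClickHouse.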

Common deployment shapes:

- Pilot default — Postgres (or Supabase) control plane. Everything works, fastest install.

- Analytics-heavy — Postgres + ClickHouse. Postgres stays transactional; ClickHouse ingests the audit/event firehose.

- Snowflake-native (unified stack) — Snowflake as the primary and analytics store. Uses Hybrid Tables for transactional writes (scan / fleet / policy / audit), columnar tables for analytics, Snowpipe Streaming for real-time ingest, and the Postgres-compatible protocol where clients need it. Cross-cloud replication lets EKS read/write the same tables your Cortex MCPs read, regardless of region. Best when you already govern data there. See snowflake-backend.md.

Four shipped examples in deploy/helm/agent-bom/examples/:

| File | Shape | Use when |
|---|---|---|
| `eks-mcp-pilot-values.yaml` | Postgres + MCP-focused scanner CronJob + restricted ingress | Pilot scope, MCP + agents + fleet + proxy |
| `eks-production-values.yaml` | Postgres pool tuned + HPA + pod anti-affinity + PriorityClass | Production rollout |
| `eks-istio-kyverno-values.yaml` | Istio mTLS + Kyverno policy + PSA restricted | Regulated / zero-trust environments |
| `eks-snowflake-values.yaml` | Snowflake as primary backend via key-pair auth | You already govern data in Snowflake |

Most self-hosted teams start with the surfaces below. The focused pilot simply turns on a narrower subset first; it does not use a different architecture. Every one of them maps to code in this repo and ships today.

- scan: discovery, inventory, CVE, image, IaC, Kubernetes, cloud analysis (`src/agent_bom/cli/agents/`)
- CI/CD gate: GitHub Action packaging of the scan surface for pull-request and release workflows with SARIF output
- fleet: endpoint + collector inventory pushed into the control plane (`POST /v1/fleet/sync`)
- proxy / runtime: per-MCP sidecar or stdio wrapper, the honest mode for stdio MCPs and workload-local enforcement (`src/agent_bom/proxy.py`)
- gateway: two things, same namespace:
  - central policy + audit plane (`/v1/gateway/*`) that every enforcement point pulls + pushes (`src/agent_bom/api/routes/gateway.py`)
  - central HTTP traffic plane (`agent-bom gateway serve`) that fronts N remote MCP upstreams behind one URL with fleet-driven auto-discovery, bearer + OAuth2 client-credentials auth injection, inline `check_policy`, and audit push (`src/agent_bom/gateway_server.py`, `src/agent_bom/cli/_gateway.py`)
- API + UI: operator plane for findings, graph, remediation, audit, policy, compliance (`src/agent_bom/api/server.py`, `ui/`)

flowchart LR

clients["Cursor · Claude · VS Code<br/>Codex · Cortex · Continue"]

cli["agent-bom agents --push"]

prx["agent-bom proxy <mcp>"]

cp(["agent-bom control plane<br/>in your EKS cluster"])

clients -.-> cli

clients -.-> prx

cli -->|HTTPS push| cp

prx -->|policy pull · audit push| cp

The Helm chart installs a single namespace with the control plane, its backup job, and the operator surface. Selected MCP workloads run alongside with an agent-bom-proxy sidecar that pulls gateway policy and pushes audit events back.

flowchart TB

subgraph ns["namespace: agent-bom"]

direction TB

api["Deployment: agent-bom-api<br/>3 replicas · HPA · /readyz drain"]

ui["Deployment: agent-bom-ui<br/>2 replicas"]

cron["CronJob: controlplane-backup<br/>pg_dump → S3 (SSE-KMS)"]

es[("ExternalSecret<br/>API keys · HMAC key · DB URL")]

obs["PrometheusRule + Grafana dashboard ConfigMap"]

end

subgraph work["Selected MCP workloads (same or adjacent ns)"]

direction LR

mcpsvc["MCP server pod"]

proxy["Sidecar: agent-bom-proxy"]

mcpsvc -.- proxy

end

api --- ui

api --- es

api -. scrape / alert .- obs

api --- cron

proxy -->|policy pull · audit push| api

Outside the namespace but in your VPC: Postgres (primary state), ClickHouse (optional analytics), External Secrets wired to KMS, and Prometheus + Grafana + OTel scraping the API. The restore round-trip is exercised in CI (backup-restore.yml).

flowchart TB

REQ([HTTP request])

BODY[Body size + read timeout]

TRACE[Trust headers + W3C trace]

AUTH["Auth — API key · OIDC · SAML"]

RBAC[RBAC role check]

TENANT[Tenant context propagation]

QUOTA[Tenant quota + rate limit]

ROUTE[Route handler]

AUDIT[(HMAC audit log)]

STORE[(Postgres · ClickHouse · Snowflake<br/>KMS at rest)]

REQ --> BODY --> TRACE --> AUTH --> RBAC --> TENANT --> QUOTA --> ROUTE

ROUTE --> AUDIT

ROUTE --> STORE

Every layer is testable on its own; failures emit Prometheus metrics. Operators introspect a live request via GET /v1/auth/debug and see rotation status via GET /v1/auth/policy.
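The claim that every layer is testable on its own follows naturally if each stage is a plain function over the request. The sketch below models three of the stages from the diagram (body limit, auth, RBAC) in that style. The limit value, role names, and status codes are assumptions for illustration and are not taken from the agent-bom source.

```python
from typing import Callable

Request = dict
Stage = Callable[[Request], None]  # a stage raises Rejected to stop the request


class Rejected(Exception):
    """Raised by a stage that refuses the request."""

    def __init__(self, stage: str, status: int):
        super().__init__(f"{stage} rejected request with {status}")
        self.stage, self.status = stage, status


def body_limit(req: Request) -> None:
    if len(req.get("body", b"")) > 1_000_000:  # assumed limit
        raise Rejected("body", 413)


def auth(req: Request) -> None:
    if not req.get("api_key"):
        raise Rejected("auth", 401)


def rbac(req: Request) -> None:
    if req.get("role") not in {"admin", "viewer"}:  # hypothetical roles
        raise Rejected("rbac", 403)


# Order matters: cheap structural checks run before identity checks.
PIPELINE: list[tuple[str, Stage]] = [
    ("body", body_limit), ("auth", auth), ("rbac", rbac),
]


def handle(req: Request) -> str:
    """Run each stage in order; the first failure short-circuits the rest."""
    for _name, stage in PIPELINE:
        stage(req)
    return "routed"


print(handle({"body": b"{}", "api_key": "example", "role": "viewer"}))
```

Each stage can be unit-tested by calling it directly with a dict, and a metrics hook could wrap the `Rejected` exception at a single point in `handle`.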

Inside the control plane: OIDC + SAML SSO with RBAC, enforced API-key rotation policy, tenant-scoped quotas + rate limits, HMAC-chained audit log with signed export, KMS-encrypted Postgres backups with a verified restore round-trip in CI (backup-restore.yml), and signed compliance evidence bundles with Ed25519 asymmetric signing (/v1/compliance/{framework}/report — key pinned via /v1/compliance/verification-key, verification cookbook at docs/COMPLIANCE_SIGNING.md).

Pilot teams run:

# 1. Pick your backend shape (postgres default; snowflake / istio / production also shipped)

helm install agent-bom oci://ghcr.io/msaad00/charts/agent-bom \

--version 0.81.0 \

-n agent-bom --create-namespace \

-f deploy/helm/agent-bom/examples/eks-mcp-pilot-values.yaml

# 2. Smoke-test the install end-to-end — health + auth + fleet + scan + evidence bundle

kubectl -n agent-bom port-forward svc/agent-bom-api 8080:8080 &

./scripts/pilot-verify.sh http://localhost:8080 "$API_KEY"

# 3. Sync endpoint fleet

agent-bom agents --preset enterprise --introspect \

--push-url https://agent-bom.example.com/v1/fleet/sync

# 4. Wrap one MCP server with the runtime proxy (per-MCP today — see roadmap note above)

agent-bom proxy --policy ./policy.json -- <editor-mcp-command>

# 5. Pull an auditor-ready evidence bundle

curl -sD headers.txt -o soc2.json \

"https://agent-bom.example.com/v1/compliance/soc2/report" \

-H "Authorization: Bearer $API_KEY"

See docs/ENTERPRISE_SECURITY_PLAYBOOK.md for the full enterprise trust story: every capability mapped to a code path and a test, with the scripted EKS pilot install at the end. Also: site-docs/deployment/eks-mcp-pilot.md for the focused pilot runbook and docs/COMPLIANCE_SIGNING.md for offline signature verification.
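Step 5 can also be driven from a script. The sketch below builds the same GET request as the `curl` command using only the standard library; it constructs the request without sending it, and the helper name and example key are placeholders.

```python
import urllib.request


def compliance_report_request(
    base_url: str, framework: str, api_key: str
) -> urllib.request.Request:
    """Build (but do not send) the GET request for a compliance report."""
    url = f"{base_url.rstrip('/')}/v1/compliance/{framework}/report"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


req = compliance_report_request("https://agent-bom.example.com", "soc2", "example-key")
print(req.get_method(), req.full_url)
```

Passing `req` to `urllib.request.urlopen` would perform the call; the response headers are worth keeping alongside the body, as the `curl -D headers.txt` form above does.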

Operator guides by scenario:

| Scenario | Guide |

|---|---|

| Enterprise trust story (start here for pilots) | ENTERPRISE_SECURITY_PLAYBOOK.md |

| Own AWS / EKS end-to-end | own-infra-eks.md |

| Enterprise pilot scope | enterprise-pilot.md |

| Focused EKS MCP pilot | eks-mcp-pilot.md |

| Endpoint fleet on laptops | endpoint-fleet.md |

| Snowflake-native backend | snowflake-backend.md |

| Istio + Kyverno zero-trust | kubernetes.md |

| Backend parity matrix | backend-parity.md |

| Grafana dashboards | grafana.md |

| SIEM / OCSF integration | siem-integration.md |

| Metrics catalog + SLOs | OBSERVABILITY_METRICS.md |

| Performance + sizing | performance-and-sizing.md |

Self-hosted SSO uses OIDC or SAML; SAML admins fetch SP metadata at /v1/auth/saml/metadata. Control-plane API keys follow an enforced lifetime policy (AGENT_BOM_API_KEY_DEFAULT_TTL_SECONDS, AGENT_BOM_API_KEY_MAX_TTL_SECONDS); rotate in place at /v1/auth/keys/{key_id}/rotate.
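A default-plus-maximum lifetime policy usually reduces to a few lines. The sketch below reads the two environment variables named above and resolves a requested lifetime against them. Whether agent-bom clamps an oversized request or rejects it is not stated here, so the clamping behavior, the fallback values, and the function name are all assumptions.

```python
import os


def effective_ttl(requested, env=os.environ) -> int:
    """Resolve an API key lifetime in seconds under a default/max policy."""
    # Fallback numbers are placeholders, not agent-bom's shipped defaults.
    default = int(env.get("AGENT_BOM_API_KEY_DEFAULT_TTL_SECONDS", 30 * 86400))
    maximum = int(env.get("AGENT_BOM_API_KEY_MAX_TTL_SECONDS", 90 * 86400))
    ttl = default if requested is None else requested
    if ttl <= 0:
        raise ValueError("TTL must be positive")
    # Assumed behavior: clamp to the maximum instead of rejecting.
    return min(ttl, maximum)


policy_env = {
    "AGENT_BOM_API_KEY_DEFAULT_TTL_SECONDS": "3600",
    "AGENT_BOM_API_KEY_MAX_TTL_SECONDS": "7200",
}
print(effective_ttl(None, policy_env), effective_ttl(86400, policy_env))
```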

agent-bom is a read-only scanner. It never writes configs, never executes MCP servers, never stores credential values. No telemetry. No analytics. Releases are Sigstore-signed with SLSA provenance and self-published SBOMs.

| When | What's sent | Where | Opt out |
|---|---|---|---|
| Default CVE lookups | Package names + versions | OSV API | `--offline` |
| Floating version resolution | Names + requested version | npm / PyPI / Go proxy | `--offline` |
| `--enrich` | CVE IDs | NVD, EPSS, CISA KEV | omit `--enrich` |
| `--deps-dev` | Package names + versions | deps.dev | omit `--deps-dev` |
| `verify` | Package + version | PyPI / npm integrity endpoints | don't run `verify` |
| Optional integrations | Finding summaries | Slack / Jira / Vanta / Drata | don't pass those flags |

Full trust model: SECURITY_ARCHITECTURE.md · PERMISSIONS.md · SUPPLY_CHAIN.md · RELEASE_VERIFICATION.md.

Bundled mappings for FedRAMP, CMMC, NIST AI RMF, ISO 27001, SOC 2, OWASP LLM Top-10, MITRE ATLAS, and EU AI Act. Export tamper-evident evidence packets in one command.

agent-bom agents -p . --compliance-export fedramp -o fedramp-evidence.zip

agent-bom agents -p . --compliance-export nist-ai-rmf -o evidence.zip

The audit log itself is HMAC-chained and exportable as a signed JSON/JSONL bundle at `GET /v1/audit/export`.
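For context on what HMAC chaining buys, here is a generic sketch of the technique: each entry's MAC covers the previous entry's MAC plus its own payload, so editing or removing any earlier entry invalidates everything after it. The record layout and helper names are assumptions; this is not agent-bom's actual on-disk or export format.

```python
import hashlib
import hmac
import json


def _mac(key: bytes, prev: str, event: dict) -> str:
    """HMAC-SHA256 over the previous MAC concatenated with the canonical event."""
    payload = json.dumps(event, sort_keys=True)
    return hmac.new(key, (prev + payload).encode(), hashlib.sha256).hexdigest()


def append_entry(chain: list[dict], event: dict, key: bytes) -> None:
    """Append an event whose MAC is bound to the previous entry."""
    prev = chain[-1]["mac"] if chain else ""
    chain.append({"event": event, "prev": prev, "mac": _mac(key, prev, event)})


def verify_chain(chain: list[dict], key: bytes) -> bool:
    """Recompute every MAC in order; any edit or reorder breaks the chain."""
    prev = ""
    for entry in chain:
        expected = _mac(key, prev, entry["event"])
        if entry["prev"] != prev or not hmac.compare_digest(entry["mac"], expected):
            return False
        prev = entry["mac"]
    return True


key = b"demo-key-not-for-production"
log: list[dict] = []
append_entry(log, {"tool": "query_db", "allowed": True}, key)
append_entry(log, {"tool": "run_shell", "allowed": False}, key)
print(verify_chain(log, key))
```

Verification needs the same secret key, which is why a chained log is typically paired with a separate signature on export for third-party auditors.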

pip install agent-bom # CLI

docker run --rm agentbom/agent-bom agents # Docker

For published containers, the split is:

- `agentbom/agent-bom` = the main runtime image for CLI, API, jobs, gateway, proxy-related entrypoints, and MCP server mode
- `agentbom/agent-bom-ui` = the standalone browser UI image used when the self-hosted control plane runs the UI separately from the API

| Mode | Best for |
|---|---|
| CLI (`agent-bom agents`) | local audit + project scan |
| Endpoint fleet (`--push-url …/v1/fleet/sync`) | employee laptops pushing into self-hosted fleet |
| GitHub Action (`uses: msaad00/agent-bom@v0.81.0`) | CI/CD + SARIF |
| Docker (`agentbom/agent-bom`) | isolated scans, API jobs, and non-browser self-hosted entrypoints |
| Browser UI image (`agentbom/agent-bom-ui`) | the separate Next.js UI container paired with a self-hosted API |
| Kubernetes / Helm (`helm install agent-bom deploy/helm/agent-bom`) | self-hosted API + dashboard, scheduled discovery |
| REST API (`agent-bom api`) | platform integration, self-hosted control plane |
| MCP server (`agent-bom mcp server`) | Claude Desktop, Claude Code, Cursor, Codex, Windsurf, Cortex |
| Runtime proxy (`agent-bom proxy`) | MCP traffic enforcement |
| Shield SDK (`from agent_bom.shield import Shield`) | in-process protection |

Backend choices stay explicit and optional:

- `SQLite` for local and single-node use
- `Postgres` / `Supabase` for the primary transactional control plane
- `ClickHouse` for analytics and event-scale persistence
- `Snowflake` for warehouse-native governance and selected backend paths

Run locally, in CI, in Docker, in Kubernetes, as a self-hosted API + dashboard, or as an MCP server — no mandatory hosted control plane, no mandatory cloud vendor.

References: PRODUCT_BRIEF.md · PRODUCT_METRICS.md · ENTERPRISE.md · How agent-bom works.

CI/CD in 60 seconds

- uses: msaad00/agent-bom@v0.81.0
  with:
    scan-type: scan
    severity-threshold: high
    upload-sarif: true
    enrich: true
    fail-on-kev: true

Container image gate, IaC gate, air-gapped CI, MCP scan, and the SARIF / SBOM examples are documented in site-docs/getting-started/quickstart.md.

36 security tools available inside any MCP-compatible AI assistant:

{

"mcpServers": {

"agent-bom": {

"command": "uvx",

"args": ["agent-bom", "mcp", "server"]

}

}

}

Also on Glama, Smithery, MCP Registry, and OpenClaw.

Install extras + output formats

| Extra | Command |

|---|---|

| Cloud providers | pip install 'agent-bom[cloud]' |

| MCP server | pip install 'agent-bom[mcp-server]' |

| REST API | pip install 'agent-bom[api]' |

| Dashboard | pip install 'agent-bom[ui]' |

| SAML SSO | pip install 'agent-bom[saml]' |

JSON · SARIF · CycloneDX 1.6 (with ML BOM) · SPDX 3.0 · HTML · Graph JSON · Graph HTML · GraphML · Neo4j Cypher · JUnit XML · CSV · Markdown · Mermaid · SVG · Prometheus · Badge · Attack Flow · plain text. OCSF is used for runtime / SIEM event delivery, not as a general report format.

git clone https://github.com/msaad00/agent-bom.git && cd agent-bom

pip install -e ".[dev-all]"

pytest && ruff check src/

CONTRIBUTING.md · docs/CLI_DEBUG_GUIDE.md · SECURITY.md · CODE_OF_CONDUCT.md

Apache 2.0 — LICENSE

Open security scanner for AI supply chain — agents, MCP servers, packages, containers, cloud, GPU, and runtime.
